Judging Phishing Under Uncertainty: How Do Users Handle Inaccurate Automated Advice?

Abstract

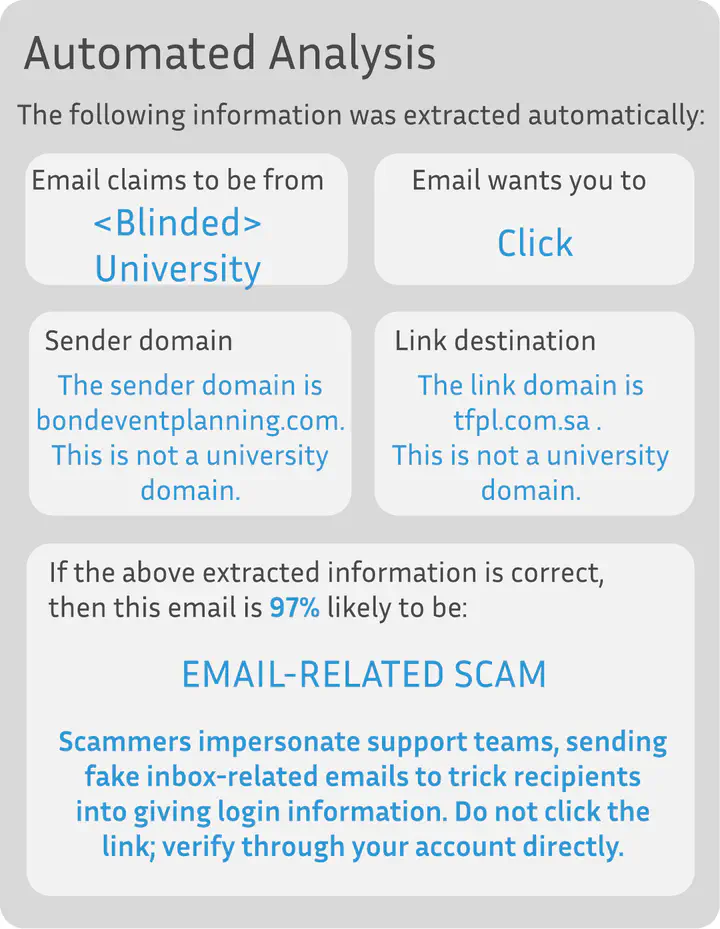

Providing accurate and actionable advice about phishing emails is challenging. Most advice is generic and hard to implement. Phishing emails that pass through filters and land in user inboxes are usually sophisticated and exploit differences between how humans and computers interpret emails. Users therefore need accurate and relevant guidance to take the right action. This study investigates the effectiveness of guidance based on features extracted from emails, which, even in AI-driven systems, can sometimes be inaccurate and lead to poor advice. In an online survey of 489 participants on Prolific, we examined three conditions: control (generic advice), perfect advice, and realistic advice. We measured user accuracy and confidence in phishing detection with and without guidance. Our findings indicate that advice specific to the email is more effective than generic guidance (control). Inaccuracies in the guidance can also affect user decisions and reduce detection accuracy.