PhishEd

Phishing is one of the most effective vectors for tricking users into providing sensitive information, such as account details, to attackers who then use the information to harm users, organizations, and society at large. Recent attacks, such as the Colonial Pipeline shutdown in the US, can be attributed to phishing. In the UK, 86% of businesses reported receiving phishing attacks [1]. While 76% of UK users believe that they can recognize and avoid suspicious links in emails [2], our own research shows that only about 8% can accurately read a URL [3]. The £53.7 million lost in the UK in 2020 to impersonation fraud also attests to the scale of the problem [4].

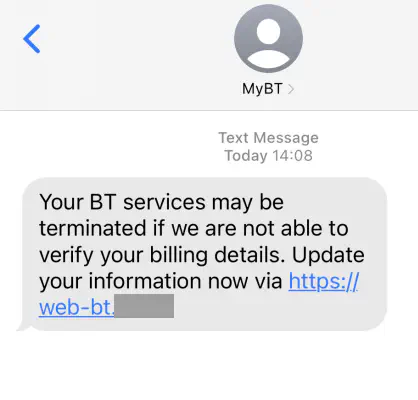

Employer-provided training tends to be delivered either up front or after the employee has fallen for a mock phishing attack. These approaches do work, but they are very employer-centric in that employers choose the time and content of the training. Delivering the same training to everyone also forces a compromise between covering topics comprehensively and giving detailed, actionable guidance. For example, training commonly advises users to “look at the destination of links” but does not have the space to explain how to do so. Users in this situation have virtually no agency or control and cannot shape their own learning to match their needs. Users also experience phishing as an infrequent, unexpected event that is mixed in with other activities. Phishing by its nature is designed to appear urgent or threatening. A user receiving such a message may be worried or scared about what might happen if they were to ignore it. Currently, their only options are to delete the potential phish (scary) or report it and ask for advice (slow).
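To illustrate what "look at the destination of links" involves in practice, here is a minimal Python sketch that pulls out the host a link actually leads to. It is an illustration only and not part of PhishEd; the function name `link_destination` and the example URLs are hypothetical. It uses the standard library's `urllib.parse.urlsplit` and does not attempt to identify the registrable domain, which would need a public suffix list.

```python
from urllib.parse import urlsplit


def link_destination(url: str) -> str:
    """Return the host a link leads to, or an empty string if there is none."""
    host = urlsplit(url).hostname  # hostname is lower-cased and excludes userinfo and port
    return host or ""


# Anything before "@" is user information, not the destination.
print(link_destination("https://hmrc.gov.uk@example.com/refund"))

# The destination is read from the right: this host belongs to example.com.
print(link_destination("https://hmrc.gov.uk.example.com/refund"))
```

Both example links display a familiar name at the start, yet neither leads to that organization's site, which is the kind of detail generic training rarely has room to explain.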

In this project, we propose a novel phishing-advice tool, PhishEd, which accepts reports from users of potential phishing emails, uses AI to parse out contextual phishing features, and quickly responds to the user with advice based on the content of the reported email. The advice explains the reasoning or frames the decision the user needs to make. For example, a reported phishing email might mention “HMRC” but have links leading to Dropbox. The automatic response would inform the user that the email is not from HMRC (based on DKIM) and that the links lead to Dropbox, which HMRC would never use. It would also provide examples from the email as evidence for these claims. The objectives of this project are to: (i) help users confidently make safety decisions, (ii) teach them skills for detecting fraud, and (iii) make them want to report phishing in the future.
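The HMRC example can be sketched in a few lines of Python. This is a rule-based illustration under stated assumptions, not the PhishEd implementation, which uses AI to extract features. The names `BRAND_DOMAINS`, `extract_features`, and `build_advice` are hypothetical, the sample email is invented, and a `dkim=pass` entry in the `Authentication-Results` header is used as a simplified stand-in for real DKIM verification.

```python
import re
from email import message_from_string
from urllib.parse import urlsplit

# Hypothetical mapping from a brand name to the domains it is assumed to use.
BRAND_DOMAINS = {"HMRC": ("gov.uk",)}


def extract_features(raw_email: str) -> dict:
    """Collect a few contextual features from a single-part plain-text email."""
    msg = message_from_string(raw_email)
    body = "" if msg.is_multipart() else msg.get_payload()
    auth = msg.get("Authentication-Results", "").lower()
    urls = re.findall(r"https?://[^\s<>\"']+", body)
    hosts = {urlsplit(u).hostname for u in urls}
    return {
        "dkim_pass": "dkim=pass" in auth,  # simplification: trusts the header
        "link_hosts": sorted(h for h in hosts if h),
        "brands": [b for b in BRAND_DOMAINS if b.lower() in body.lower()],
    }


def build_advice(features: dict) -> list:
    """Turn features into short explanations that cite evidence from the email."""
    advice = []
    for brand in features["brands"]:
        if not features["dkim_pass"]:
            advice.append(f"The email mentions {brand}, but the sender could not be verified (DKIM).")
        for host in features["link_hosts"]:
            allowed = BRAND_DOMAINS[brand]
            if not any(host == d or host.endswith("." + d) for d in allowed):
                advice.append(f"The email mentions {brand}, but a link leads to {host}.")
    return advice


sample = (
    "From: HMRC <refunds@hmrc-tax.example>\n"
    "Subject: Tax refund\n"
    "Authentication-Results: mx.example; dkim=fail\n"
    "\n"
    "Your HMRC refund is ready: https://www.dropbox.com/s/abc/refund.pdf\n"
)
features = extract_features(sample)
for line in build_advice(features):
    print(line)
```

Each piece of advice names the evidence it is based on (the brand mentioned and the link host), mirroring the goal of showing users examples from their own email rather than generic guidance.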

1. NCSC Cyber Security Breaches Survey 2020.

2. UK Consumer Digital Index 2019.

3. S.S. Albakry, K. Vaniea, M.K. Wolters. What is this URL’s Destination? Empirical Evaluation of Users’ URL Reading. In Proceedings of the 2020 CHI Conference on Human Factors in Computing Systems, 2020.

4. UK Finance, Fraud – The Facts 2021.

Read more about the project at the REPHRAIN PhishEd website.